Last week you might have seen a tweet about a day in the life of a Data Platform Consultant. To say the least, my days are varied.This day, in particular, I was split between building out automated ETL tests using Biml and spinning up a new Azure Data Lake. Up until recently, I would have thought of those two as unrelated, but something very interesting has come from that day. I’m starting to see that all this buzz around big data and newer technologies like Hadoop aren’t as new as you think.

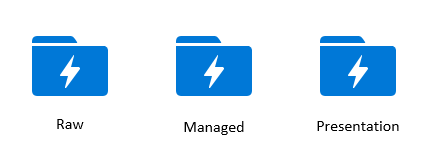

Turns out there are three basic zones or areas to a data lake. Raw, Managed, and Presentation.

The raw zone should be optimized for fast storage. The goal is to get the data in as quickly as possible. Don’t make any changes to this data. You want it stored as close to the original format as possible. It sounds just like staged data to me. Data you’d build an extract package to get from source to your staging environment, right?

Maybe you’re thinking this is just a coincidence…let’s move on.

The next zone is often called the standardized, or managed zone. This zone holds data that has been cleaned up. It could be that data has been standardized into a common format. If you have sources with multiple layouts, you pull out the shared or standardized data and store that here. You may even convert some data types, or perform some lookups to get the data ready for further analysis later. Sounds awfully similar to your transform schema between your stage and Operational Data Store (ODS) or Data Warehouse(DW), doesn’t it?

A little less coincidental, eh?

The final zone is your presentation zone. Here you want to store data ready for consumption by your users’ applications, like PowerBI or Excel. You want data in this zone to be as optimized as you can for specific needs your users have. Sounds like my Data Warehouse schema, or my tabular model in SSAS.

One final piece of the puzzle fell into place Friday when I was in Melissa Coates Data Lake session. When going through U-SQL Polybase and moving data from point A to point B Melissa shared the missing piece. U-SQL statements compile down to C# behind the scenes.

C# is what I use to enhance my Bimlscripts. C# is a language that uses Net libraries like Microsoft.SqlServer.Dts.Runtime one of the libraries in SSIS.

So that means the frameworks we’ve been using forever to move data from point A to point B aren’t different in Data Lake. We’re just changing the engine under the hood. We get some new features, some that will make things faster, some will make it more scalable, but still, the same frameworks hold true.

You don’t have to believe me just yet. I’m going to share notes I’ve been keeping on a current project. I’m going to prove it’s the same as before. And I”m even going to show how it’s possible to automate this development. Get ready for your Keanu moment.